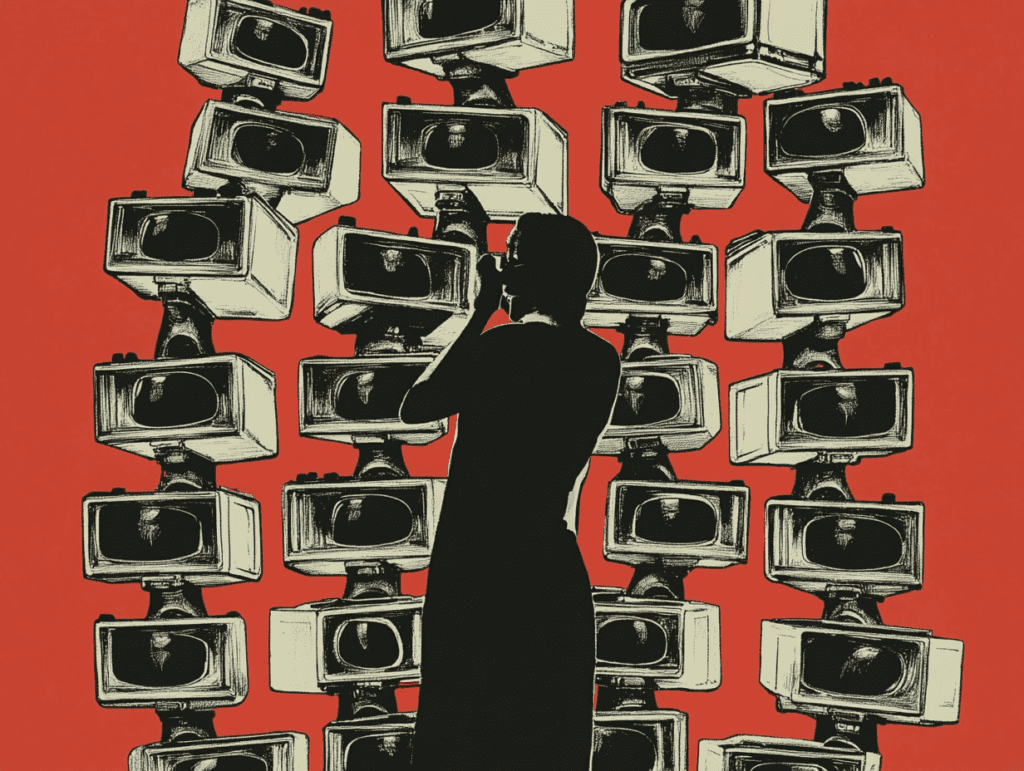

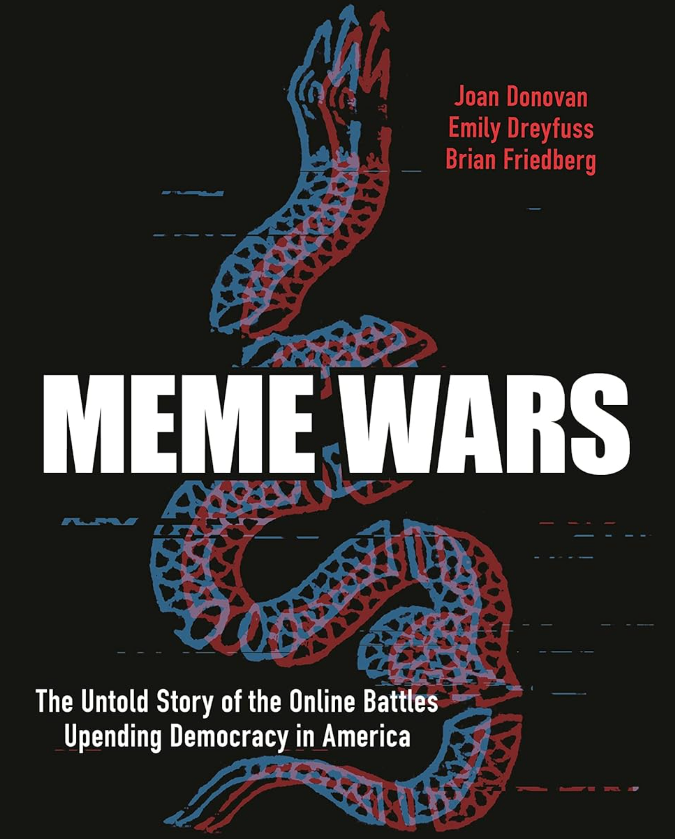

Meme Wars: How Digital Culture Became a Weapon Against Democracy

In their groundbreaking book “Meme Wars: The Untold Story of the Online Battles Upending Democracy in America,” researchers Joan Donovan, Emily Dreyfuss, and Brian Friedberg offer a chilling examination of how internet culture has been weaponized to undermine democratic institutions. Far from being a distant academic analysis, this book serves as an urgent warning about the very real dangers facing our democracy in the digital age.

When Internet Jokes Become Political Weapons

Remember when memes were just harmless internet jokes? Those days are long gone. “Meme Wars” meticulously documents how these seemingly innocent cultural artifacts have evolved into powerful weapons in a coordinated assault on American democracy. This is a form of information warfare that erodes our very ability to distinguish fantasy from reality, something Hannah Arendt identified as a key tool of totalitarian regimes.

What makes this transformation particularly insidious is how easy it is to dismiss. After all, how could crudely drawn frogs and joke images possibly be a threat to democracy? Yet the authors convincingly demonstrate that this dismissive attitude is precisely what has allowed far-right operatives to wield memes so effectively.

The book reveals how figures like Alex Jones, Milo Yiannopoulos, Nick Fuentes, and Roger Stone have mastered the art of meme warfare. These digital provocateurs understand something that traditional political institutions have been slow to grasp: in today’s media environment, viral content can bypass established gatekeepers and directly shape public opinion at scale.

The Digital Radicalization Pipeline

Perhaps the most disturbing aspect of “Meme Wars” is its detailed examination of what the authors call the “redpill right” and their techniques for radicalizing ordinary Americans. The process begins innocuously enough—a provocative meme shared by a friend, a YouTube video recommended by an algorithm—but can quickly lead vulnerable individuals down increasingly extreme ideological paths.

This digital radicalization operates through sophisticated emotional manipulation. Content is carefully crafted to trigger outrage, fear, or a sense of belonging to an in-group that possesses hidden truths. Over time, these digital breadcrumbs lead users into alternative information ecosystems that gradually reshape their perception of political reality.

From Online Conspiracy to Capitol Insurrection

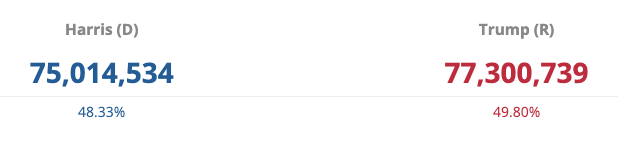

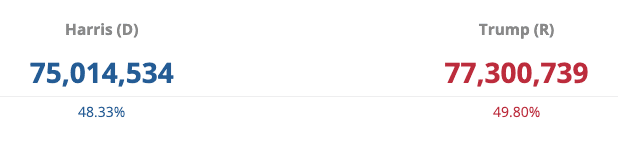

“Meme Wars” provides what may be the most comprehensive account to date of how online conspiracy theories materialized into physical violence on January 6th, 2021. The authors trace the evolution of the “Stop the Steal” movement from fringe online forums to mainstream platforms, showing how digital organizing translated into real-world action.

The book presents the Capitol insurrection as the logical culmination of years of digital warfare. Participants like “Elizabeth from Knoxville” exemplify this new reality: they acted simultaneously as insurrectionists and content creators, live-streaming their participation for online audiences even as they took part in an attempt to overturn the results of a democratic election.

This fusion of digital performance and physical violence represents something genuinely new and dangerous in American politics. The insurrectionists weren’t just attacking the Capitol; they were creating content designed to inspire others to join their cause.

Inside the Digital War Rooms

What sets “Meme Wars” apart from other analyses of digital extremism is the unprecedented access the authors gained to the online spaces where anti-establishment actors develop their strategies. These digital war rooms function as laboratories where messaging is crafted, tested, and refined before being deployed more broadly.

The authors document how these spaces identify potential recruits, gradually expose them to increasingly extreme content, and eventually mobilize them toward political action. This sophisticated recruitment pipeline has proven remarkably effective at growing extremist movements and providing them with dedicated foot soldiers.

The Existential Threat to Democracy

At its core, “Meme Wars” is a book about the fundamental challenge digital manipulation poses to democratic governance. By deliberately stirring strong emotions and deepening partisan divides, meme warfare undermines the rational discourse and shared reality necessary for democratic deliberation.

The authors make a compelling case that these tactics represent an existential threat to American democracy. What’s more, the digital warfare techniques developed in American contexts are already being exported globally, posing a worldwide challenge to democratic institutions.

Confronting the Challenge

Perhaps the most important contribution of “Meme Wars” is its insistence that we recognize digital threats as real-world dangers. For too long, online extremism has been dismissed as merely virtual—something separate from “real” politics. The events of January 6th definitively shattered that illusion.

While the book doesn’t offer easy solutions, it makes clear that protecting democracy in the digital age will require new approaches from institutions, platforms, and citizens alike. We need digital literacy that goes beyond spotting fake news to understanding how emotional manipulation operates online. We need platforms that prioritize democratic values over engagement metrics. And we need institutions that can effectively counter extremist narratives without amplifying them.

A Must-Read for Democracy’s Defenders

“Meme Wars” is not just a political thriller, though it certainly reads like one at times. It is a rigorously researched warning about how extremist movements are reshaping American culture and politics through digital means. For anyone concerned with the preservation of democratic institutions, it should be considered essential reading.

The authors (among them Joan Donovan, widely respected as one of the foremost scholars of disinformation) have performed a valuable service by illuminating the hidden mechanics of digital manipulation. Now it’s up to all of us to heed their warning and work to build democratic resilience in the digital age. The future of our democracy may depend on it.